Various sources estimate that Excel has between 750 million and 1 billion users worldwide. Odds are that the vast majority of analysts start manipulating data with Excel. Beyond its ubiquity, and from my days of analytics at Microsoft, I have come to appreciate Excel for its versatility, for its expansion capabilities through plugins like this Excel plugin for TURF analysis, for example, and for a robustness that many software products could learn from. Let’s be clear, Excel is here to stay for many more years, with a deeply involved community. Loads of people are using Excel, and it’s easy to understand why. It’s fairly easy to use, however, some people might prefer to get some extra training in to make sure that they know exactly what they are doing. If you are interested in taking some extra classes then you could take a look at something like these excel classes to take in denver. There are loads of different classes out there, you just have to make sure that you find the right one for you. Before you take any class though, make sure that you do some research beforehand to make sure that you get the class you actually want.

I have been using Excel a lot, and I even keep writing on the topic, including in this blog. Yet, I find myself using Excel+ PowerPivot less and less. The first strike was when I started to use Tableau and realized that a stacked bar chart did not have to be so painful and time consuming to produce, as it still is with Excel. The second and near fatal strike came when I started using Alteryx, looking for a way to automate repetitive tasks, without tedious Visual Basic, and most important: without Copy & Paste.

If you have a process that requires Copy & Paste, you should know you have outgrown Excel.

As I moved along the experience curve using Alteryx, I discovered 9 additional benefits, less obvious, that I gathered and will detail in that post:

- Build Once, repeat automatically and reliably

- Modular design for sharing

- Change data type

- Obfuscate easily

- Identify what did not match in a Vlookup or an Index/Match

- Import many sheet

- Union of tables

- Reshape Data

- Increase Data Granularity

- Decrease Data Granularity

1. Build once, repeat automatically

That first benefit of switching to Alteryx is pretty self explanatory: develop workflow once, repeat at will. You can even schedule with the proper infrastructure, with either a scheduler license or a Alteryx Server. and you will also take care of the documentation along the way. Alteryx provides connection progress and count of records processed at every stage for reliable monitoring.

2. Modular design

The benefit of Alteryx workflows, formerly known as modules prior to V10, is that you can easily reuse and combine them, in a almost Object-Oriented way. You can also share datasets by writing one process’ output to a local database of the .yxdb type, and that dataset can then be used by one or many subsequent workflows. You can also use Alteryx Macros, as I demonstrated in that earlier post, to maintain centrally a process in a macro, to be shared with many workflows.

3. Change data type

First things first: by default, Excel cells don’t have a data type! Excel tries to smartly assign itEver had an issue converting an Excel cell from Text to Number or vice versa? a simple Google search shows over 13m pages… Not mentioning when cells from the same columns end up having different formats for mysterious reasons. With the Select tool in Alteryx, just set what the column should be, including a variable type, and you are done. You can even automatically optimize your fields data type as described in this post.

4. Obfuscate your data easily

Should you need to present your models outside your organization, some confidentiality requirements will most likely drive the need to mask some business indicators. This can be done with Excel, using formulas, most likely RANDBETWEEN(), but you will need to have the real data coexist in the spreadsheet, at least during the process, and you might miss scrubbing some parts. You’d be much better masking the data in Alteryx and load only the scrambled data in your model. A previous post describes how easily this can be done, and especially when it comes to repeat the process…

5. Identify what did not match in a Vlookup or an Index/Match

It is one thing to be able to map 2 tables properly in Excel, hopefully by using Index/Match, but another to identify what did not match, the “leftovers” from one side or from the other side. Why you should bother checking those rejects? At the design stage, or even in production, you should monitor the rejects to ensure they are matching what you would expect from what you know of your dataset. This is where you will collect undocumented / unknown cases. Excel would conceal those as it ignores the rejects. Quite unforgiving…

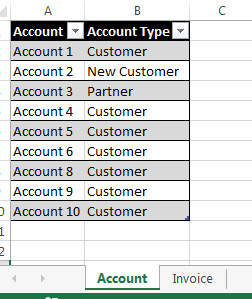

For illustration, suppose you do a lookup of your invoices against the Account Master Table to assign an Account Type. You should expect every account to have an Account Type:

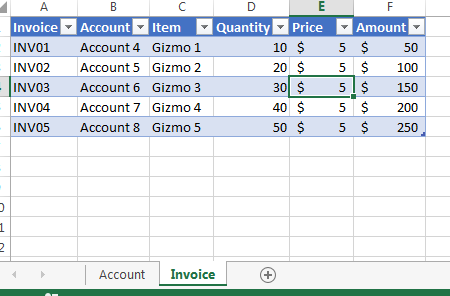

But unbeknown to you, Account 7 is not found in the Account Table. This will be easy to spot in Alteryx when you run the workflow using the Join tool:

But unbeknown to you, Account 7 is not found in the Account Table. This will be easy to spot in Alteryx when you run the workflow using the Join tool:

Whereas an Excel VLOOKUP will get you only 4 matches, circled in blue, Alteryx will draw your attention to the red figures. I would expect the 5 red unmatched, as not every account has an invoice template, but the one red Left join, the Account 7 issue, is unexpected…

Whereas an Excel VLOOKUP will get you only 4 matches, circled in blue, Alteryx will draw your attention to the red figures. I would expect the 5 red unmatched, as not every account has an invoice template, but the one red Left join, the Account 7 issue, is unexpected…

6. Import many sheet

If you ever had to bring data from many sources, such as a series of CSVs broken for size reasons, or by regions, you will recall the task as painful or deprived of any interest… And suppose you need to do it over every week with a fresh new dataset, you should promptly start to curse at Excel, and write some fancy Visual Basic script, which will keep breaking with every source file evolution. There too, Alteryx has you covered, with many different ways to automate the process, depending on your needs:

- http://community.alteryx.com/t5/Data-Sources/Excel-import-Multiple-sheets-with-a-range/td-p/236

- http://community.alteryx.com/t5/Data-Sources/Importing-Multiple-tabs-from-an-excel-file/td-p/826

- http://community.alteryx.com/t5/Alteryx-Knowledge-Base/Input-multiple-Excel-sheets-or-tabs-at-once/ta-p/1243

- http://community.alteryx.com/t5/Alteryx-Knowledge-Base/Reading-in-multiple-files-with-different-Field-Schema/ta-p/13370

- http://community.alteryx.com/t5/Engine-Works-Blog/The-Ultimate-Alteryx-Holiday-gift-of-2015-Read-ALL-Excel-Macro/ba-p/9988This brings us to the next step: once you have smoothly imported those sheet, how to collapse them into one?

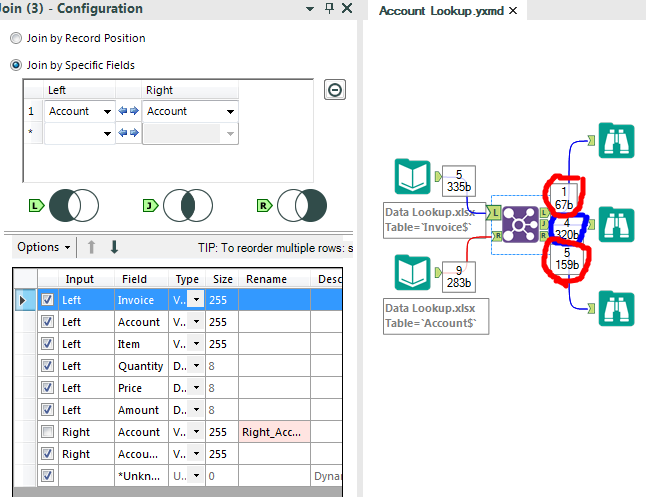

7. Union of tables

Correct me in the comments if I’m wrong, but the only way I know to stack / Union 2 tables in Excel, is to copy the second and paste it at the bottom of the first… Not exactly a desired approach. Even Tableau has spotted the gap and introduced with Version 9.3 a way to perform the Union in Tableau:

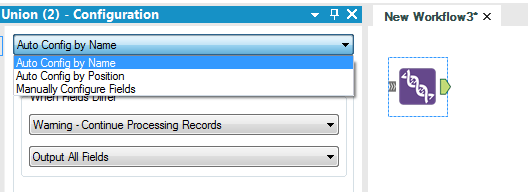

Alteryx has offered a no-brainer Union tool since its very early versions, which offers a variety of options:

8. Reshape Data

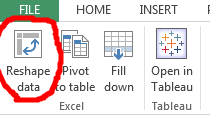

Let’s face it, most data you will obtain in Excel format, is not properly shaped for analysis, not even ready for an Excel Pivot, in most cases. For instance, it has subtotals, headers and more data irrelevant to most BI tools, which need to be scrapped. Furthermore, the table is usually wide instead of being tall, with less columns but more rows. A tall format offers much better performance for aggregation engines, which can offer totals or subtotals on the fly. Therefore, the whole process of preparing the typical Excel shape data into BI tool friendly format is called reshaping. Here is a good field guide published by Tableau, aware of the gap that most Excel users face. Tableau went as far as offering an excellent free addon for Excel to automate some of those tasks:

If you already use Alteryx, the process is even simpler using the Transpose tool:

9. Increase Data Granularity

Sometimes you can be faced with a situation where your dataset is too aggregated for your purpose. For illustration, I need to blend 2 data sources, one has daily transactions and the other one has monthly targets, and I need to present the attainment against target by week. The only way is to break down the target dataset into daily values, that is increasing the granularity from Month to Day, effectively increasing the number of rows by a factor of over 30.

That scenario can be fairly easily tackled and repeated using the Generate Rows tool, as demonstrated in this post. There are many of such padding scenarios that are very difficult to approach with Excel, and quite a breeze with Alteryx. On the flip side, a much more common scenario is to get rid of irrelevant details and Decrease Data granularity:

10. Decrease Data Granularity

Last but not least, there are many reasons to discard irrelevant level of details, for easier processing by users, for increased system processing performance by getting rid of the girth. It could even simply be for storage optimization to increase compatibility for intergration of other software options, such as document management software that can be found by looking at https://www.filecenterdms.com/info-efilecabinet-alternative-and-replacement.html or similar websites that can offer document management solutions to better organize storage of data and digital files once they’ve been optimized correctly.

Why not let the front end BI tool take care of it, with their optimized engines, fancy columnar database and other creative features? Because it’s better to aggregate once upfront while in Alteryx, than tasking the front end tool and taxing its resource for each end user navigation step.

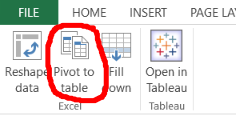

In Excel, the way to proceed is to build a pivot, after reshaping the data to a pivot friendly format, and copy & paste (sigh) the resulting pivot aggregated table into a separate tab. the Tableau Excel add in also assists that last stage with that function:

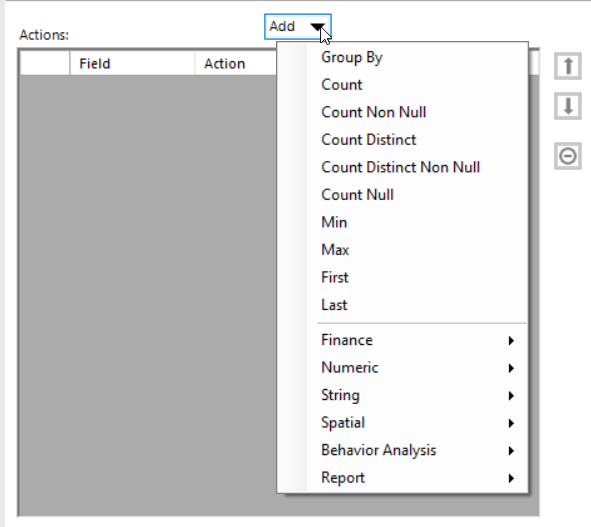

Not elegant, not reliable, not repeatable. A much better approach is to use Alteryx with the Summarize tool:

The tool offers every aggregating function an Excel pivot does and more, such as Count Distinct:

There are situations where calculations are not needed, such as picking a Min/Max/First/Last from a series of transactions, where the Summarize tool can be a bit tedious to configure, and where it becomes much more efficient to deploy my favorite tool combo: Sort + Unique:

There are situations where calculations are not needed, such as picking a Min/Max/First/Last from a series of transactions, where the Summarize tool can be a bit tedious to configure, and where it becomes much more efficient to deploy my favorite tool combo: Sort + Unique:

If you sort properly your dataset, Unique tool will pick the first transaction from a series you configure, really easily, effectively aggregating your dataset by discarding the non relevant. The Alteryx engine will also process that operation with Sort+Unique much faster than the Summarize tool.

As a last note, if you remain stuck with Excel because you are not yet convinced you could boost your productivity and enrich your tasks, or because you can’t invest in an Alteryx license yet, do yourself a favor and make sure you are using the 64 bits (X64) version of Excel. If you are using the 32 bits, you are restricting Excel to access only 3 Gigs of your RAM, which is a pity when you manipulate data. the x64 has no such restrictions, and no downside besides the occasional lack of compatibility with a very outdated plug in. That is not the case of the Tableau Plug In which works fine on Excel 64. The license for Excel 32bits includes the x64 version, no extra cost. If you are unsure of the version you are running, go to File / Account and press the about Excel button, the top line will state whether it’s the 32 or 64 bits version.

If this post convinced you that your next step is to get started with Alteryx, and you are not sure where to start, here is some guidance:

https://insightsthroughdata.com/getting-started-with-alteryx-and-tableau/

Modern Excel does all this via Power Query.

I mostly agree. Yes you can Union using the Append funciton of PowerQuery, but I doubt you can reshape or generate rows easily. Furthermore, PowerQuery is an add-on which happens to be published by Microsoft, developed by a different division. It’s good to pull data, much more limited to shape it. There are tons of other tools that can overcome those issues. The purpose of this post is to show what Alteryx can do for Excel users, especially those who have not ventured in other tools. Also note that Alteryx is offering a connector for Power BI, which proves that Power Desktop is not yet up to snuff… But if you can prove me wrong, I’d happy to learn more…

I use PowerQuery specifically for reshaping data! The ability to easily unpivot, remove columns and filter rows is like no other tool I’ve used with a GUI. It’s only an add-in for earlier versions of Excel to be included in the fun as it comes standard with Excel 2016 (re-branded as Get & Transform). Since this post is aimed at Excel users I would be much more inclined to keep all my data within Excel using Get & Transform and PowerPivot than resort to external add-ins. Just my preference!

True, PowerQuery can do some of those transformations, but overall it is quite limited, for the scenarii 5 and 9 for instance.. When I was using it, I felt the formulae were too limited without resorting to DAX, which is hardcore for non coders…

Scenario 5: use Left Outer Join in PQ to identify non-matching items – http://exceleratorbi.com.au/power-query-as-an-audit-tool/

Scenario 9: I wouldn’t advise increasing (inventing) granularity; get better data.

I know this is an old post. I am currently on an Alteryx training course.

Scenario 9: is now possible in power query with the “unpivot columns” function. Having said that, it is quicker and easier to complete the same task in Alteryx than power query.

Hi Frederic,

I realize that this thread is old – I ended up here while searching for reviews of Alteryx vs Power BI.

Scenario 9 is very simple to do in Power BI or Power Query via list generator functions (e.g. List.Numbers(), List.Dates(), List.Generate()). I looked at the Generate Rows tool in Alteryx. For the first example, it’s a simple case of using List.Numbers. I brought the second example into Power Query as a table, and got the required result in less than a minute. Both examples are trivial for Power Query.

In fact, all the scenarios are so trivial that they can be done via the Power Query UI. Harder transformations (some of which Alteryx probably can’t handle), can be done using Power Query’s M language.

Hmm, having to learn a new language huh? I’d rather drag & drop, but that’s just me…

Good to know about those List.* functions, I am not as advanced with the new tool set that augments Excel. Thanks for contributing…

The main difference between Power Query and alteryx is that Alteryx is not scriptable. For people with zero programming, alteryx is great. But if someone has programming background, Power Query can do these things you describe with mostly GUI and a little M. In other words, alteryx is superior in getting non programmers started while Power Query is also good for non programmers but really shines if you can program. Of course, the other difference is the price. Depending on your use case, Power Query can save a business tens of thousands of dollars per year.

Pingback: 10 Tricks to adopt Redshift In-DB | Insights Through Data